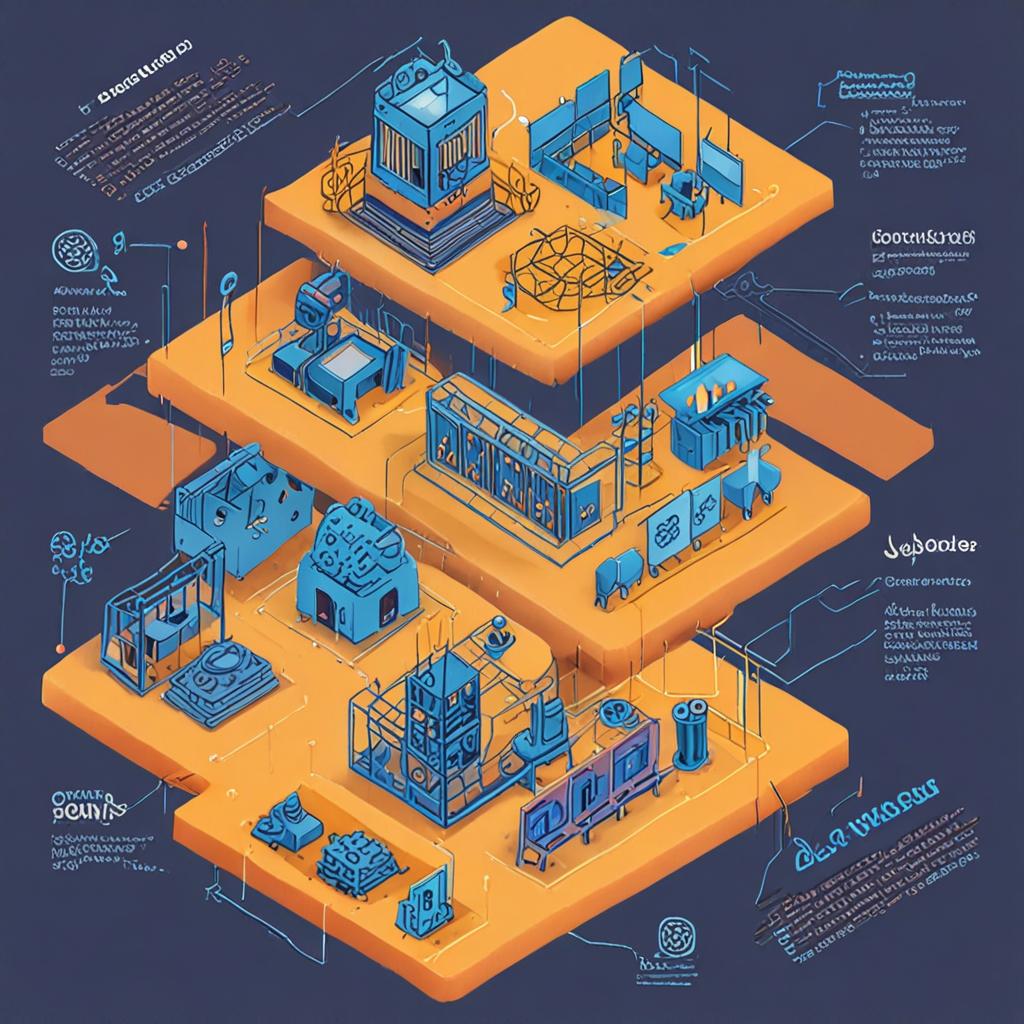

LLMOps Best Practices 2024: From Prototype to Production-Grade Systems

A practitioner's guide to the LLMOps best practices that separate fragile demos from reliable production systems: prompt versioning, observability, evaluation, and cost governance.